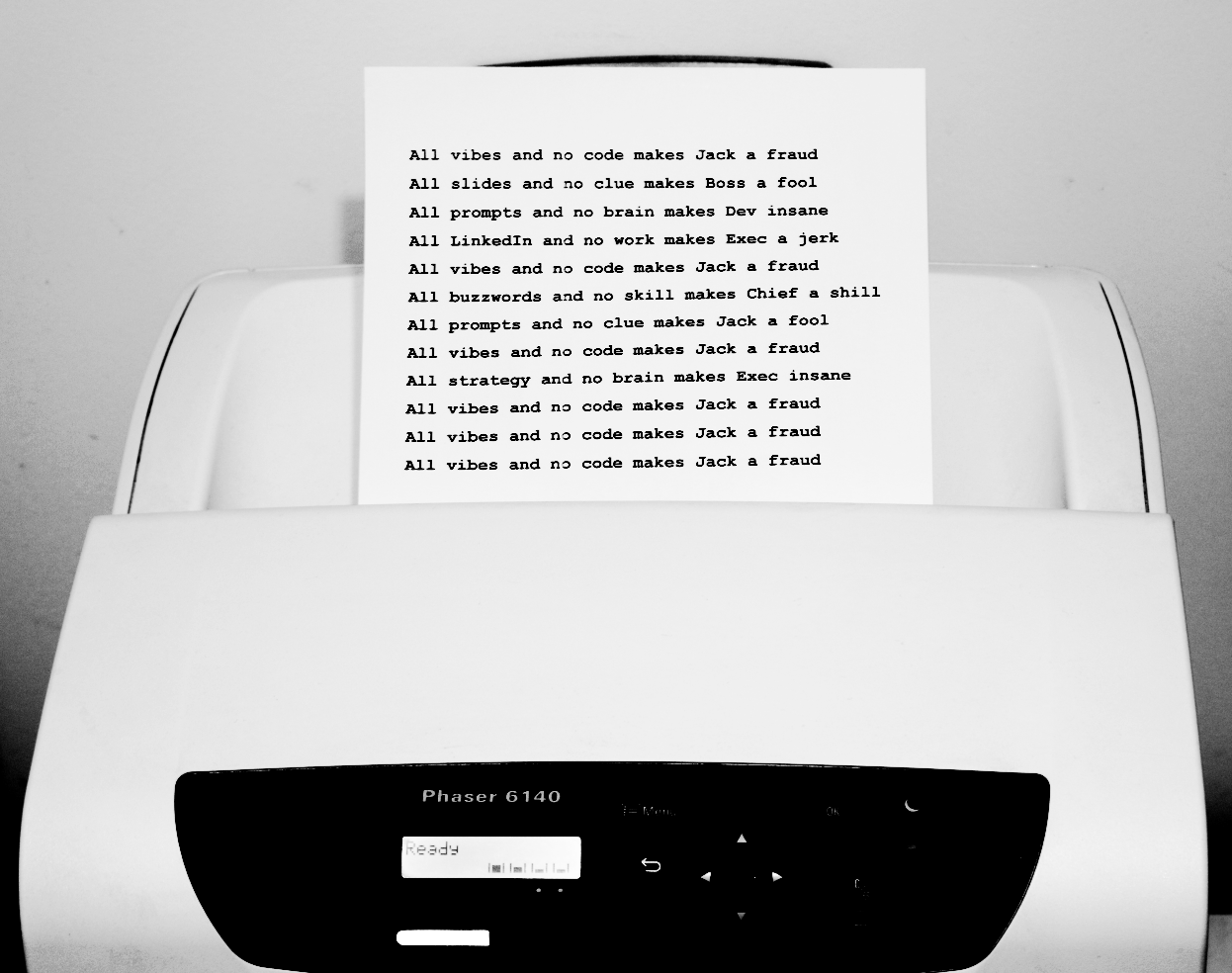

Vibe Coding: A Monkey Behind the Controls

Why AI Assistants Make Terrible Architects, and What Happens When We Forget

⚠️ Content Warning

This article contains uncomfortable truths about AI hype, the illusion of competence, and why your 200 PRs might be a liability. Side effects may include questioning your workflow and an urge to actually learn fundamentals.

I. The Physical World Problem

I've been using Claude since March 2024 for all sorts of things - half the time just to test its limits.

Here's what I've learned: AI knows nothing about the physical world.

It's only useful for "virtual things" - stuff that doesn't exist in physical reality. No atoms. No friction. No consequences that bleed into meatspace.

Code is one of those rare things. A pure human-invented abstraction. Logic gates all the way down. This is where AI shines.

And yet.

Here's the irony: we worry about LLM (large language model) hallucinations - models confidently stating false things.

But the bigger hallucination problem isn't in the models. It's in the evangelists.

Due to confirmation bias, AI evangelists hallucinate LLMs into being far more capable and useful than they actually are. They see what they want to see. They share the wins, bury the failures. They extrapolate from demos to revolution.

Yes, these are good tools. But here's the uncomfortable fact:

II. The 200 PRs Myth

Everyone's talking about the junior who shipped 200 PRs (pull requests) with Claude.

Nobody's asking how many got reverted.

Somewhere in the bowels of Silicon Valley, an engineer spent last month with zero IDE open, every line written by AI, and 200 pull requests shipped.

This is December 2025. Karpathy called it a "magnitude 9 earthquake" hitting the profession.

But here's the question nobody asks: What happens six months later?

- How many of those PRs introduced subtle bugs that only surface under load?

- How many created technical debt that someone else has to untangle?

- How many were "working" code that nobody actually understands?

Speed without understanding is just technical debt with extra steps.

III. Where Vibe Coding Works

Let's be fair. AI genuinely accelerates certain categories of work:

| Category | Why It Works |
|---|---|
| One-off scripts | Throwaway code. Errors don't compound. |
| Boilerplate | Patterns repeated a million times. Nothing novel. |
| Prototypes | Proving a concept, not building for production. |
| "Make me this function" | Small, isolated, testable units. |
| Finding performant patterns | AI has seen thousands of implementations. Saves a Google rabbit hole. |
| Trivial refactoring | Mechanical transformations. Rename, restructure, reformat. |

For these tasks, AI isn't 10x faster - it's 100x faster.

Google and StackOverflow are being replaced by Claude Code. That part of the job is effectively solved.

But there's a critical caveat:

AI doesn't replace understanding. It replaces hours of searching, tedious boilerplate, and trivial refactoring. The thinking is still your job.

IV. Where It Falls Apart

Now for the uncomfortable part.

| Category | Why AI Fails |
|---|---|
| System architecture | Requires understanding the whole. AI sees functions, not systems. |
| Complex state management | Emergent behavior across components. AI can't hold it all. |
| Deep debugging | Requires a mental model of actual execution. AI guesses. |
| Security | AI makes confident-sounding mistakes. In security, that's catastrophic. |
| Cross-file ripple effects | One "quick fix" touches 50 files. AI doesn't see the graph. |
| Innovation | See next section. |

The AI doesn't hold the whole system in its head. You do.

Or at least, you're supposed to.

V. The Copycat Problem

Why AI Cannot Innovate

AI doesn't think. It pattern-matches.

Everything an LLM "knows" is something a human already wrote, said, or built. It can recombine. It can synthesize. It can find similarities across domains.

But it cannot ask:

- "Why do we do it this way?"

- "What if every assumption is wrong?"

- "What hasn't been tried?"

These questions require something AI fundamentally lacks: skin in the game.

Innovation comes from frustration, curiosity, stubbornness. From waking up at 3am with an idea. From the irrational conviction that everyone else is wrong.

AI has none of this. It's a very fast, very comprehensive copycat.

| What AI Does | What Innovation Requires |
|---|---|
| Recombines existing patterns | Questions why patterns exist |
| Optimizes within constraints | Challenges the constraints themselves |
| Gives safe, consensus answers | Takes risks on unpopular ideas |
| Trained on the past | Imagines futures that don't exist yet |

Good at what's been done a thousand times. Useless for what's never been done.

The Popularity Paradox:

Here's something that surprised me: LLMs struggle with Unix terminal programming. Writing a terminal emulator from scratch. Epoll. Terminal character handling. PTY internals.

Most of this is 40+ year old technology. Fully documented. Open source. Man pages exist. POSIX standards exist.

And yet, LLMs fumble it. Why?

| What exists | What's in training data |
|---|---|
| Man pages, POSIX standards | "How to center a div" × 1,000,000 |
| Terminal source code | React tutorials × 500,000 |
| epoll documentation | "My journey learning JavaScript" × ∞ |

Maybe 500 people in the world write terminal emulators. 5,000,000 people write React components.

LLMs know what's talked about, not what's fundamental.

Old and foundational ≠ well understood. Popular and shallow = well understood.

This extends beyond coding. LLMs are most capable in domains with the most online chatter - not the most documentation, not the most rigor, not the most importance. Just the most noise.

Innovation comes from engineers, not models.

The Compiler Illusion

In February 2026, Anthropic published a blog post about 16 Claude instances autonomously building a C compiler in two weeks for $20,000. LinkedIn exploded. "GCC took 37 years and thousands of engineers. AI just did it in 2 weeks." The "Zero Delay Economy" had arrived, apparently.

Except it hadn't. Here's what the headlines didn't mention:

- The resulting compiler generates code less efficient than GCC with all optimizations turned off

- It lacks a 16-bit x86 backend - and literally calls out to GCC for that part

- It has no working assembler or linker of its own

- The author, Nicholas Carlini, is an Anthropic employee - this was a product demo for their new model

- Carlini himself admits the compiler has "nearly reached the limits" of the model's abilities

But here's the real point: LLMs have seen compiler source code. Vast amounts of it. GCC, LLVM, TCC (Tiny C Compiler), countless compiler textbooks, university courses, blog posts, and Stack Overflow threads - all in the training data. The model didn't invent a compiler. It recombined patterns from every compiler it had already ingested.

A 2025 study by Cooper et al. (Stanford, Cornell, WVU) demonstrated just how deeply this memorization goes. They showed that LLMs like Llama 3.1 70B have memorized entire copyrighted books so thoroughly that you can extract them chapter by chapter from just a single-line seed prompt. Harry Potter, 1984 - near-verbatim, deterministically. The model isn't understanding these works. It's storing and replaying patterns.

The same mechanism applies to the compiler: pattern retrieval dressed up as engineering. Impressive? Yes. "Replicating 37 years of work"? Not even close. It's the copycat problem at industrial scale - with a marketing department attached.

VI. The Translation Illusion

There's a widespread expectation that LLMs can translate books, films, and music. This is wrong.

What LLMs do is word replacement - swapping source language tokens for statistically probable target language equivalents. This is not translation. Not in any meaningful sense of the word.

Translating a literary work means carrying over the author's voice, rhythm, cultural context, wordplay, subtext, and emotional weight into another language. It means making choices that have no "correct" statistical answer - because the translator must understand what the author meant, not just what the author wrote. A pun that works in English might need to become an entirely different joke in Estonian. A cultural reference that resonates in Japanese might need a completely different anchor in French. A sentence's rhythm might matter more than its literal meaning.

LLMs don't make these choices. They don't understand meaning. They perform high-speed pattern substitution based on what appeared most often next to what in the training data. The output often looks like a translation - grammatically correct, superficially fluent - which makes the illusion even more dangerous.

This is exactly the same problem as vibe coding: the output looks plausible, so people assume it's correct. But plausible is not the same as good, and fluent is not the same as faithful.

As discussed in the previous section, Cooper et al. showed that LLMs can memorize training data so thoroughly that entire books can be extracted near-verbatim. The same mechanism that lets a model replay Harry Potter is the mechanism behind its "translations": pattern retrieval, not comprehension.

VII. The Monkey Behind the Controls

Here's the scenario that keeps me up at night:

A vibe coder who can't actually program. They prompt. They accept. They ship. The code looks fine. The tests pass (the ones AI wrote, anyway).

Six months later, something breaks. Not a simple bug - a deep architectural flaw. The kind that requires understanding why the system was built this way.

The vibe coder stares at the screen. They don't understand what they shipped. They never did.

The scary part? They won't realize it until production is on fire.

This isn't hypothetical. It's already happening. The failure modes just haven't surfaced yet at scale.

When they do, we'll discover how much "AI-assisted development" was actually "AI-dependent cargo culting."

VIII. The Structure That Never Forms

When you think through something yourself - whether it's code, a strategy, or a system design - something happens in your head.

A structure forms. A paradigm.

It's not just "knowing the answer." It's having a mental architecture that lets new information flow through it. You own the work in a way that's hard to articulate. When someone asks "why did you do it this way?" - you know. Not because you memorized it, but because you built it.

When AI does the work:

The structure forms somewhere else. In the model. In the weights. In a place you cannot access or retrieve in any understandable form.

You get output. You don't get understanding.

| You wrestle with the problem | AI generates output |
|---|---|
| Structure forms in YOUR head | Structure stays in the model |
| You own the paradigm | You rent the result |
| New problems flow through your mental model | Each new problem starts from zero |
| "Why this way?" → You know | "Why this way?" → You don't know |

For developers:

A lawyer who has manually worked through hundreds of contracts develops pattern recognition they can't even articulate - intuitions about where things break, what's missing, what's subtly wrong.

Same with engineers. Debug enough systems, and you develop a sense. "This feels wrong." "Check the state management." "I bet it's a race condition."

That sense doesn't come from reading code. It comes from wrestling with code. From being stuck. From the 3am frustration that finally resolves into understanding.

AI skips the wrestling. Which means it skips the structure.

For executives:

A manager who lets AI write the strategy doesn't own that strategy.

When the board asks "why this direction?" - they don't know. When circumstances change and the strategy needs adapting - they can't adapt it. They have a document, not a paradigm.

Ownership only happens when the structure lives in you.

The uncomfortable truth:

You can't retrieve the structure from AI. You can only retrieve output. And output without structure is just text - plausible-looking, confident-sounding text that you have no way to evaluate, extend, or defend.

IX. The Decay

Here's what nobody talks about: expertise doesn't just fail to grow. It actively erodes.

When you stop wrestling with details, the mental structures that let you evaluate outputs start to fade. Days without hands-on work, and your judgment gets fuzzy. Weeks, and the paradigms start dissolving.

Now combine that with a critical fact: LLMs are non-deterministic. Slightly different output every time. Same prompt, different result.

Without solid mental models to evaluate against, you're not directing the process. You're just accepting whatever the machine happens to produce. You're trailing behind the output, not leading it.
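The non-determinism described above comes largely from how text is generated: at each step the model scores every candidate next token, and chat tools typically sample from those scores instead of always taking the top one. Here is a minimal Python sketch of temperature sampling, assuming a hypothetical four-word vocabulary and made-up scores; real models work over vocabularies of tens of thousands of tokens, and this toy ignores every other source of variation.

```python
import math
import random


def softmax(logits, temperature):
    """Turn raw scores into probabilities; lower temperature sharpens the distribution."""
    scaled = [x / temperature for x in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def next_token(vocab, logits, temperature, rng):
    """Pick the next token: greedy at temperature 0, sampled otherwise."""
    if temperature == 0:
        # Greedy decoding: always the single highest-scoring token.
        return vocab[logits.index(max(logits))]
    probs = softmax(logits, temperature)
    return rng.choices(vocab, weights=probs, k=1)[0]


# Hypothetical scores a model might assign after one fixed prompt.
vocab = ["mutex", "queue", "retry", "cache"]
logits = [2.0, 1.6, 1.2, 0.4]

rng = random.Random()  # unseeded, so separate runs can differ
sampled = [next_token(vocab, logits, 0.8, rng) for _ in range(5)]
greedy = [next_token(vocab, logits, 0, rng) for _ in range(5)]
print("sampled:", sampled)
print("greedy: ", greedy)
```

Run it twice and the sampled line will almost always differ, while the greedy line always lists "mutex" five times. Same prompt, different result: the variation is built into the generation step, not a glitch.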

| With expertise | Without expertise |
|---|---|
| You evaluate LLM output against mental models | You accept what looks plausible |
| You catch subtle errors | Subtle errors ship to production |
| You direct the process | The process directs you |
| AI accelerates your judgment | AI replaces your judgment |

The orchestra metaphor:

AI evangelists love saying "you'll become a conductor, orchestrating AI outputs."

But a conductor who has never played an instrument makes a poor conductor. The baton means nothing without the years of practice behind it.

And if you replace all the musicians with AI, what exactly are you conducting?

The uncomfortable pattern:

I see it all the time now. The way people talk about these tools. Where to get it. How cheaply. As long as it feels good. It becomes the main topic - everything else fades into the background.

The usage pattern looks uncomfortably like addiction.

With expertise, there is no standing still. There's only continuous practice - or gradual decline.

X. The Procurement Manager Problem

We've seen this movie before.

It's called "outsourcing expertise."

Companies and public sector organizations are full of procurement managers who lost domain expertise years ago. They used to have engineers who understood what they were buying. Then they "optimized" those roles away.

Now they waste client and taxpayer money buying nonsense - because they can't evaluate what they're buying anymore.

They drift along the procurement process. RFP in, vendor out. Checkboxes checked. Nobody asks if the thing actually works, because nobody understands it well enough to ask.

Some don't even realize their work has become essentially corrupt - not in the bribery sense, but in the systemic failure sense. Process without understanding. Motion without progress.

Vibe coders are on the same path. They'll push code through the pipeline. They'll hit their metrics. And they'll have no idea whether what they shipped actually works until it catastrophically doesn't.

It's irresponsible to claim we won't need programming skills in the future. We're not automating expertise. We're laundering its absence.

XI. A Note to Executives

The Magical Thinking Problem

There's a pattern I keep seeing, all the way up to the C-suite: people who refuse to accept the reality of what LLMs can and cannot do. They keep experimenting on their own, vibe-coding away, convinced that if they just find the right prompt - the clever trick, the magic words - they'll get results that others can't. This applies to code, to strategy documents, and especially to translation.

This is psychologically fascinating. People who can't write code and can't translate languages are also unable to evaluate whether the output is good. They see grammatically correct text and assume it's a quality translation. They see working code and assume it's good architecture. Dunning-Kruger effect at industrial scale.

And this "secret prompt" mentality is essentially magical thinking - the belief that the right incantation will produce a different result. It's the same mechanism that makes people believe they've found a system at the casino that nobody else has discovered. The model doesn't become better because you ask cleverly. The model's ceiling is the model's ceiling.

At the C-suite level, this is particularly dangerous, because these people have the power to make decisions based on this illusion. They lay off juniors. They buy AI licenses. They present LLM output as if it were their own strategy. And nobody below them dares to say the emperor has no clothes, because the boss is too enchanted with their new toy.

The result is an organizational feedback loop: the person with the least ability to evaluate AI output has the most authority to act on it, and the people who could actually spot the problems are either silenced or already let go.

For managers and executives who don't write code:

Keep your fingers off the keyboard.

If you're hoping AI will do your thinking for you - or give you expert advice in domains where you're not an expert yourself - stop.

Here's what will happen:

- You will annoy everyone above and below you.

- You won't understand what you're producing.

- You'll put LLM-formatted documents in front of people, full of details you never wrestled with yourself.

- People will notice. They always do.

The result? Intellectual DDoS - people up and down the org chart flooded with AI-generated content they can't process or evaluate.

You lack the mental structures. You lack the paradigm. You're just dragging along behind the LLM process, pretending you understand.

The daily #AI posts on LinkedIn are living proof. Confident-sounding word salad from people who've never wrestled with the details they're pontificating about.

What you CAN use it for:

| Good Use | Why It Works |
|---|---|
| "How do I write this Excel macro?" | You know Excel. You can verify the output. |
| "How do I automate this in Word?" | You use Word daily. You'll see if it's wrong. |
| "What's the shortcut for X?" | Trivial lookup. Zero risk. |
| Drafting emails you'll actually edit | You know your context. AI doesn't. |

This is the same way developers use AI - for tools you work with daily, where you understand the output and can verify it works.

XII. The Cope Playbook

Objections I've heard before you typed them

🤖 "AI will replace programmers by 2030"

People have been predicting the death of programming since COBOL. Every generation of tooling was supposed to make programmers obsolete. Instead, we have more programmers than ever, working on harder problems. AI will change what programmers do. It won't eliminate the need for people who understand systems.

🤖 "The 10x productivity boost is real"

For certain tasks, yes. For boilerplate, scripts, lookups - absolutely. But 10x at the wrong thing is just faster failure. If you don't know what you're building or why, accelerating it isn't a boost. It's a liability.

🤖 "Junior devs with AI are outperforming seniors"

At what? Shipping PRs? Sure. Building maintainable systems? Debugging production issues? Making architectural decisions? The jury's still out - and the jury is a production outage at 3am when nobody understands the code.

🤖 "You're just afraid of being replaced"

I'm afraid of systems nobody understands. I'm afraid of technical debt laundered as productivity. I'm afraid of an industry that confuses motion with progress. If AI could actually replace me, I'd learn something else. I've done it before.

🤖 "AI is getting better every month"

Yes. And it's still fundamentally a pattern-matcher trained on past data. Getting better at recombining the past doesn't give you the ability to imagine the future. Faster horses are still horses.

XIII. The Leak Waiting to Happen

Here's what's happening right now in thousands of companies:

- Employees paste confidential code into ChatGPT to "fix a bug"

- Managers feed strategic documents into Claude to "summarize"

- Executives connect Slack, email, and CRM to AI assistants for "automation"

- Developers push proprietary algorithms through Copilot

All of this data flows somewhere. Gets stored somewhere. Gets trained on - maybe.

| What they're feeding | What could leak |
|---|---|
| Slack messages | Internal politics, strategy discussions, HR issues |
| Email threads | Client negotiations, pricing, legal matters |
| Code repositories | Proprietary algorithms, API keys, security logic |
| Documents | M&A plans, financials, product roadmaps |

The question nobody's asking:

What happens when one of these AI providers has a breach? Or a rogue employee? Or a subpoena?

Your "productivity boost" just became discovery material.

And here's the governance problem: shareholders have no idea this is happening.

No board approval. No risk assessment. No disclosure. Just thousands of employees individually deciding to feed company secrets into third-party AI systems - because it's "more productive."

When the exposure hits - and it will - nobody will be able to say they were informed. Because they weren't.

XIV. The Real Hallucination

Here's the irony nobody's talking about.

Executives are complaining about "AI hallucinations" - models confidently stating false things. They're not even that worried, really. Just grumbling while trying to spin the mess into something positive.

Meanwhile, those same executives are hallucinating harder than any LLM ever could:

| Executive Hallucination | Reality |
|---|---|
| "AI will replace programmers" | We'll need more engineers who actually understand systems |
| "Juniors are obsolete now" | You just blocked your talent pipeline |
| "We only need seniors + AI" | Where do you think seniors come from? |
| "10x productivity means 10x fewer engineers" | 10x productivity means 10x more ambitious projects |

The historical mistake happening right now

Companies have blocked juniors from entering the industry. "We need 5 years experience minimum. AI will handle the rest."

So who becomes senior in 5 years?

Nobody.

You're not replacing juniors with AI. You're destroying your own talent pipeline while hallucinating that the problem is solved.

AI doesn't destroy talent pipelines. Bad leadership does - and then blames the tools.

XV. Same Old, Same Old

Here's a prediction: some chip company - maybe NVIDIA, maybe someone else - will ship an on-premise AI box. Smaller than a rack server. About the size of a DVD player. It'll sit under your desk.

When that happens, all those massive data center investments in cloud LLMs? Stranded assets.

Because we've seen this pattern before:

| Era | Model |
|---|---|
| 1970s | IBM Mainframe - dumb terminals, all compute centralized |
| 1980s | Personal Computer - compute moves to your desk |
| 2010s | Cloud - coordination moves to data centers |
| 2020s | Cloud AI - "you need our servers for AI" |
| 202X? | Local AI - inference moves back to your desk |

But let's be precise about what actually happened with cloud:

What went to cloud:

- Storage - S3, Dropbox, Google Drive

- I/O and scaling - web servers, APIs, databases

- Many small requests - parallel processing of lightweight tasks

What stayed local:

- CPU/GPU intensive work - video editing, 3D rendering, CAD

- Latency-critical - gaming, real-time applications

- Development environments - IDEs, compilers, local builds

The cloud didn't replace local compute for heavy lifting. It centralized coordination - storage, distribution, scaling. The grunt work stayed on your machine.

Now apply this to AI:

| Will stay in cloud | Will move local |
|---|---|
| Training massive models | Inference (running models) |
| API services where latency doesn't matter | Real-time AI where latency is critical |
| Shared, public models | Private data, sensitive workloads |

The moment local hardware can run inference fast enough - and it's getting close - the economics flip. Why pay per token to a cloud provider when a box under your desk does it for the price of the hardware and the electricity?

The cloud AI era might be shorter than the investors hope.
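The "economics flip" is simple break-even arithmetic: a local box pays for itself once the per-token bill it replaces, minus its own running costs, adds up to its purchase price. A minimal Python sketch, assuming a flat per-million-token price, a fixed monthly electricity cost, and a box fast enough to serve the whole workload; the function name and every number below are hypothetical, not real hardware or API prices.

```python
import math


def breakeven_months(hardware_cost, monthly_power_cost,
                     tokens_per_month, price_per_million_tokens):
    """Months until a local inference box has paid for itself versus a per-token API.

    Hypothetical model: ignores depreciation, maintenance, and any quality gap
    between local and cloud models. Returns math.inf if local never pays off.
    """
    cloud_monthly = tokens_per_month / 1_000_000 * price_per_million_tokens
    monthly_savings = cloud_monthly - monthly_power_cost
    if monthly_savings <= 0:
        # At this usage level, electricity alone costs more than the API bill.
        return math.inf
    return hardware_cost / monthly_savings


# Made-up numbers for illustration only.
heavy = breakeven_months(hardware_cost=4000, monthly_power_cost=30,
                         tokens_per_month=200_000_000, price_per_million_tokens=3.0)
light = breakeven_months(hardware_cost=4000, monthly_power_cost=30,
                         tokens_per_month=5_000_000, price_per_million_tokens=3.0)
print(f"heavy user: {heavy:.1f} months")  # heavy user: 7.0 months
print(f"light user: {light} months")      # light user: inf months
```

The `math.inf` branch is the point worth noticing: in this toy model, the flip only happens for teams that run a lot of inference, and it happens fast once they do.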

And here's what won't change:

AI amplifies wisdom. AI amplifies stupidity. You choose which.

What happens to the student who copies every assignment from their neighbor? They don't learn. They can't perform when it matters. The problem isn't new - it's the same construction, just a new source to copy from.

The difference? If your neighbor was smart, you could at least rely on them.

A smart neighbor is intellectually interesting. They challenge you. They make you better.

AI can offer something like that experience - a conversation partner that pushes back, asks questions, offers alternatives. But let's be honest: it remains a pale shadow of a real intellectual sparring partner.

XVI. The Bottom Line

Summary

| AI excels at | AI fails at |
|---|---|
| Boilerplate and scripts | System architecture |
| Pattern lookup | Novel problem-solving |
| Code generation | Code understanding |
| Answering "how" | Answering "why" and "should we" |
| Working within paradigms | Questioning paradigms |

The senior engineer who uses AI as a tool will always beat the junior who lets AI drive.

Not because seniors are smarter. Because they have mental models that let them evaluate what AI produces. They can spot when it's wrong. They understand the system it's trying to modify.

The junior who prompts without understanding is building on sand.

The Real 10x Opportunity

Learn the fundamentals. Use AI to accelerate, not to replace understanding.

The 10x boost is real. But only if you know what you're boosting.

Books, independent work, deep understanding - AI doesn't replace any of that. It just makes the gap between those who understand and those who don't even wider.

The question isn't whether AI will change programming.

The question is: Will you be the one driving, or the monkey behind the controls?